Chinmay Chandgude

AI in Healthcare Product Development: What Healthtech Teams Are Actually Building in 2026

In the first half of 2025, AI-enabled healthcare startups captured 62% of all digital health venture funding in the U.S., raising an average of $34.4 million per round, an 83% premium over non-AI peers. Nine of the eleven mega-deals (over $100 million) in that period went to AI-enabled companies, according to Rock Health's H1 2025 digital health funding report. Precedence Research values the global AI in healthcare market at $51.2 billion in 2026 and forecasts that it will reach $613 billion by 2034.

Those numbers tell you the market is real. What they do not tell you is where the actual product decisions are happening: which AI capabilities healthtech teams are building from scratch, which they are buying from platform providers, and which are proving harder to deploy than the investor decks suggested.

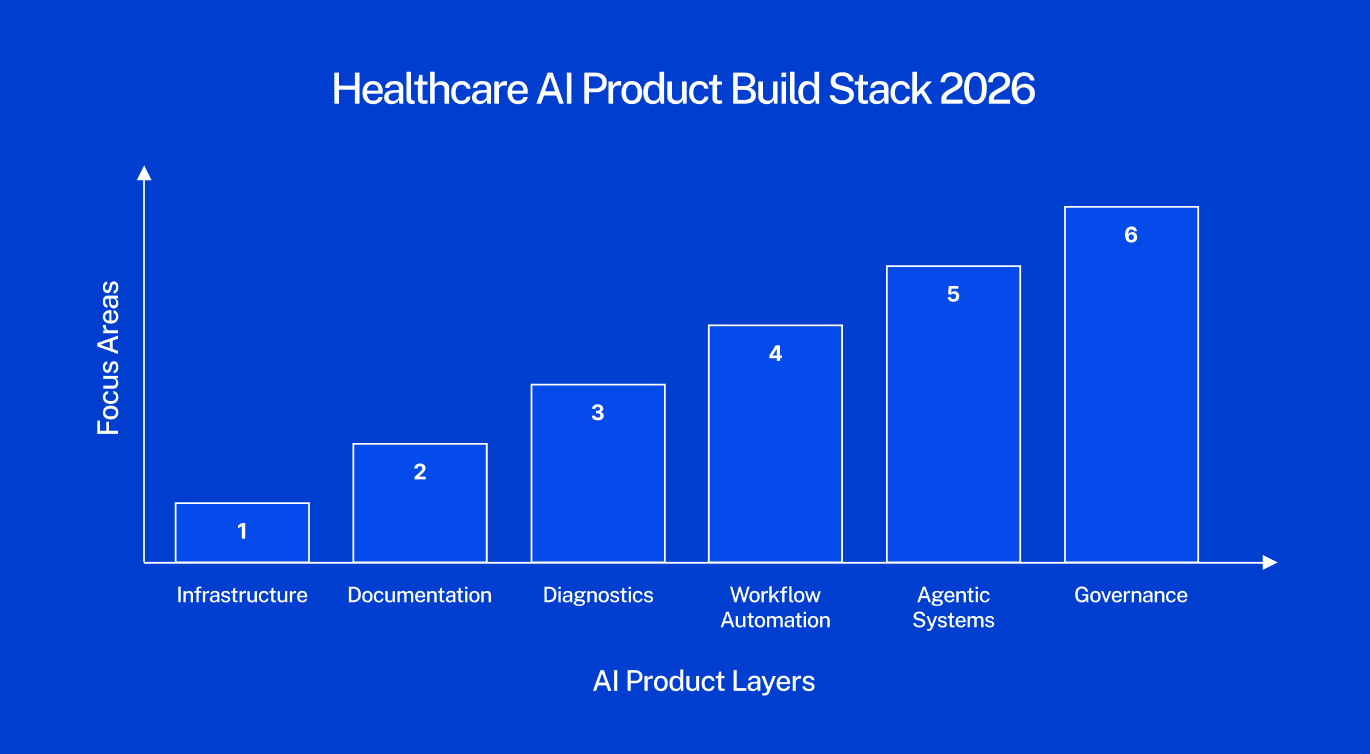

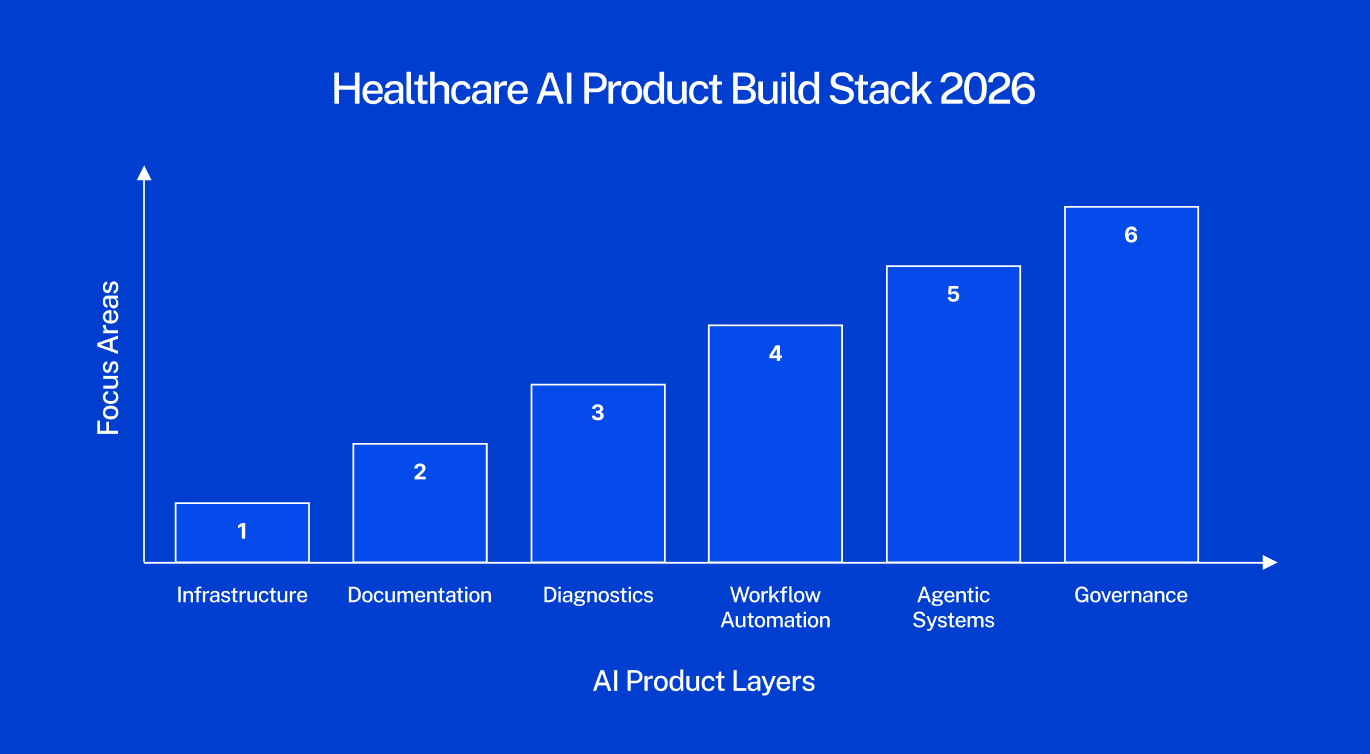

This article maps six active build categories based on where product teams are spending architecture cycles right now. Not trends. Not forecasts. Build decisions.

For context on how AI fits the broader 2026 healthcare software landscape, our healthcare software development trends 2026 guide covers the full stack.

Clinical Documentation: The First AI Category to Reach Saturation

Ambient AI documentation, clinical AI that listens to patient-provider conversations and generates structured notes in real time, is no longer a trend. It is the default deployment posture at major U.S. health systems.

A 2025 JAMIA survey of 43 U.S. health systems found that ambient documentation was the only AI use case where 100% of respondents reported adoption activity, making it the single most universally deployed AI application across healthcare. The ambient scribe market generated $600 million in revenue in 2025, a 2.4x year-over-year increase, according to Menlo Ventures' 2025 State of AI in Healthcare report. Mass General Brigham deployed ambient documentation to all its physicians by April 2025, reporting a 20% reduction in clinical burnout. Kaiser Permanente rolled out Abridge's ambient documentation across 40 hospitals and 600+ medical offices — the largest generative AI rollout in healthcare history.

The product implication for teams building in this space: the ambient scribe category itself is commoditizing. Epic, athenahealth (which launched athenaAmbient in early 2026), Oracle Health, and Meditech have all embedded ambient documentation into their core EHR workflows. The differentiation is no longer in the core AI capability; it is in specialty-specific model tuning, EHR write-back depth, and the compliance infrastructure around the model.

What product teams building documentation AI are focused on: Specialty-tuned models for high-documentation settings such as psychiatry, emergency medicine, and oncology; structured EHR write-back that passes audit; consent management workflows that comply with state wiretapping laws; and mandatory clinician review gates that address False Claims Act exposure from automation bias.

The compliance trap: "automation bias," where a clinician signs an AI-generated note without reviewing it, creates both malpractice and False Claims Act liability. For any product that generates clinical documentation, the review mechanism is not a UX feature. It is a regulatory requirement that must be designed into the product architecture from day one.
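One way to picture a review gate built into the architecture is as a small state machine in which a note cannot be signed until a clinician has attested to reviewing it. The sketch below is illustrative only: `AINote`, `NoteStatus`, `ReviewGateError`, and the idea of tracking which sections were viewed are assumptions made for this example, not a description of any vendor's product or of a specific regulatory standard.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import List, Optional, Set, Tuple


class NoteStatus(Enum):
    DRAFT = "draft"        # generated by the model, not yet reviewed
    REVIEWED = "reviewed"  # a clinician attested to reviewing the content
    SIGNED = "signed"      # eligible for EHR write-back


class ReviewGateError(Exception):
    """Raised when a note is advanced without the required review step."""


@dataclass
class AINote:
    # Hypothetical model of an AI-generated note; field names are assumptions.
    note_id: str
    text: str
    status: NoteStatus = NoteStatus.DRAFT
    reviewed_by: Optional[str] = None
    audit_log: List[Tuple[datetime, str, str]] = field(default_factory=list)

    def _log(self, event: str, actor: str) -> None:
        # Every state change is recorded with a UTC timestamp and the actor.
        self.audit_log.append((datetime.now(timezone.utc), event, actor))

    def attest_review(self, clinician_id: str,
                      sections_viewed: Set[str],
                      required_sections: Set[str]) -> None:
        # Refuse the attestation if any required section was never viewed.
        missing = required_sections - sections_viewed
        if missing:
            raise ReviewGateError(f"unreviewed sections: {sorted(missing)}")
        self.status = NoteStatus.REVIEWED
        self.reviewed_by = clinician_id
        self._log("reviewed", clinician_id)

    def sign(self, clinician_id: str) -> None:
        # Signing is only reachable from REVIEWED, and only by the reviewer.
        if self.status is not NoteStatus.REVIEWED:
            raise ReviewGateError("note must be reviewed before signing")
        if clinician_id != self.reviewed_by:
            raise ReviewGateError("signer must be the reviewing clinician")
        self.status = NoteStatus.SIGNED
        self._log("signed", clinician_id)
```

The point of the design is that the signing path does not exist for an unreviewed draft, so the gate cannot be skipped by a UI shortcut, and the audit log records who reviewed and who signed.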

For teams building AI tools that connect to EHR workflows, our breakdown of top EMR integration tools for healthcare product teams covers the integration architecture in detail.

Diagnostic AI: Concentrated in Imaging, Cautious Everywhere Else

As of July 2025, the FDA had authorized over 1,250 AI-enabled medical devices for marketing in the United States, up from 950 in August 2024, according to the Bipartisan Policy Center's FDA oversight analysis. Approximately 76% of those authorizations, roughly 950 devices, sit in radiology. Cardiology is second at around 9%. The remaining specialties share the rest.

This concentration is not arbitrary. Imaging AI has characteristics that make FDA clearance faster and more tractable: clearer clinical ground truth (a tumor is present or it is not), self-contained digital workflows, lower risk profile since the tool supports rather than replaces clinician judgment, and an established predicate device landscape that enables 510(k) clearance paths. Outside imaging, the FDA pathway is harder. Clinical areas like primary care and psychiatry involve unstructured data, significant contextual ambiguity, and higher-stakes decisions, all of which make both development and regulatory review far more complex.

Aidoc's recent FDA clearance for a platform detecting 14 acute conditions from a single CT scan workflow represents a meaningful regulatory shift from single-condition classifiers to multi-condition, workflow-aware platform authorizations. The clearance signals growing FDA appetite for more sophisticated diagnostic AI, but the evidence bar is rising alongside it.

Product teams building diagnostic AI outside imaging need to understand the Predetermined Change Control Plan (PCCP) framework finalized by the FDA in December 2024, which allows manufacturers to pre-specify how AI models will be updated post-market without requiring new submissions for each iteration. For continuously learning models, this is not optional paperwork; it is the regulatory architecture that makes ongoing model improvement legally possible.
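In engineering terms, pre-specifying updates means a release pipeline can check each proposed model change against an envelope agreed in advance. The following is a minimal sketch under stated assumptions: `ChangeEnvelope`, `ProposedUpdate`, the change-type labels, and the sensitivity and specificity floors are all hypothetical names and values chosen for illustration. A real PCCP is a regulatory document, and a check like this is not a compliance determination.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple


@dataclass(frozen=True)
class ChangeEnvelope:
    # Hypothetical, simplified stand-in for what a team pre-specified.
    allowed_change_types: FrozenSet[str]
    min_sensitivity: float
    min_specificity: float


@dataclass(frozen=True)
class ProposedUpdate:
    change_type: str
    sensitivity: float   # measured on the team's locked validation set
    specificity: float


def check_update(envelope: ChangeEnvelope,
                 update: ProposedUpdate) -> Tuple[bool, List[str]]:
    """Return (inside_envelope, reasons). Any reason means escalate to regulatory review."""
    reasons: List[str] = []
    if update.change_type not in envelope.allowed_change_types:
        reasons.append(f"change type '{update.change_type}' was not pre-specified")
    if update.sensitivity < envelope.min_sensitivity:
        reasons.append("sensitivity below pre-specified floor")
    if update.specificity < envelope.min_specificity:
        reasons.append("specificity below pre-specified floor")
    return (not reasons, reasons)


# Illustrative values only.
envelope = ChangeEnvelope(
    allowed_change_types=frozenset({"retrain_same_architecture"}),
    min_sensitivity=0.90,
    min_specificity=0.85,
)
ok, why = check_update(
    envelope, ProposedUpdate("retrain_same_architecture", 0.93, 0.88)
)
```

An update that falls outside the envelope is not blocked forever; in this sketch it is simply routed out of the automated path and into human regulatory review.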

What product teams building diagnostic AI are focused on: Imaging analysis tools with specialty-specific performance tuning; PCCP documentation for continuous learning models; multi-modal diagnostic platforms that cross-reference imaging findings with EHR history; and clinical decision support tools that explicitly stay below the FDA's regulatory threshold by advising rather than deciding.

Agentic AI: The Build Phase Is Starting — Deployment Is Still Limited

Agentic AI, meaning systems that autonomously plan and execute multi-step clinical or operational tasks without human initiation of each step, is attracting the most investor attention and the most implementation caution at the same time.

According to ScienceSoft's Q1 2026 Healthcare AI Market Watch, agentic AI adoption in healthcare is growing more slowly than other AI categories. The structural reason: action-based AI systems require deeper system integration rights, broader data access permissions, and much stricter governance frameworks than documentation or diagnostic tools. Most healthcare organizations have not yet built the governance infrastructure required to deploy agents that can autonomously act.

The regulatory frontier is being set right now. ARPA-H launched its ADVOCATE (Agentic AI-Enabled Cardiovascular Care Transformation) program in January 2026, aiming to develop the first FDA-authorized agentic AI system — a patient-facing cardiovascular care agent — through a 39-month program that explicitly includes an FDA authorization pathway, as reported by STAT News. To date, the FDA has cleared zero agentic AI systems. ADVOCATE is designed to create the pathway.

As BCG's 2026 healthcare AI analysis notes, agentic AI represents the next structural shift — from AI that advises to AI that executes. But the distinction between suggesting and acting carries completely different regulatory and liability implications. A clinical AI agent that can modify a medication or schedule a procedure is not a decision support tool. In the FDA's framework, it is a medical device performing a clinical action.

Where teams are actively building agents in 2026: Prior authorization processing, care gap identification and outreach, revenue cycle workflow automation, appointment scheduling, and patient-facing health navigation for lower-acuity tasks. The consistent pattern: agents are being deployed first in administrative and operational workflows where the liability surface is lower and governance requirements are cleaner.

The governance questions every team must answer before building an agent: What access rights does the agent need? Who is accountable when the agent acts incorrectly? How is the agent's behavior monitored post-deployment? These are not IT questions. They are product architecture questions, and the answers must be documented before enterprise buyers will evaluate the product.
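These three questions map onto three concrete pieces of architecture: an action allowlist, a named accountable owner, and an audit trail. The sketch below shows one possible shape for that, assuming a deny-by-default policy. `AgentPolicy`, `AgentGateway`, and the action names are hypothetical and exist only for this illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, FrozenSet, List, Optional


@dataclass(frozen=True)
class AgentPolicy:
    agent_id: str
    accountable_owner: str             # named person or role answerable for the agent
    allowed_actions: FrozenSet[str]    # access rights; anything else is denied
    approval_required: FrozenSet[str]  # allowed only with a human sign-off


@dataclass
class AgentGateway:
    policy: AgentPolicy
    audit_log: List[Dict[str, object]] = field(default_factory=list)

    def request(self, action: str, approved_by: Optional[str] = None) -> str:
        # Deny by default, then require approval for the sensitive subset.
        if action not in self.policy.allowed_actions:
            decision = "denied"
        elif action in self.policy.approval_required and approved_by is None:
            decision = "pending_approval"
        else:
            decision = "allowed"
        # Every request is logged, including denials, for post-deployment monitoring.
        self.audit_log.append({
            "at": datetime.now(timezone.utc),
            "agent": self.policy.agent_id,
            "owner": self.policy.accountable_owner,
            "action": action,
            "approved_by": approved_by,
            "decision": decision,
        })
        return decision


# Illustrative policy for an administrative scheduling agent.
gateway = AgentGateway(AgentPolicy(
    agent_id="scheduling-agent",
    accountable_owner="director-of-patient-access",
    allowed_actions=frozenset({"send_reminder", "reschedule_appointment"}),
    approval_required=frozenset({"reschedule_appointment"}),
))
```

Here a call such as `gateway.request("adjust_medication")` is denied because the action was never granted, while `gateway.request("reschedule_appointment")` waits for a human approver. Both leave an audit record that names the accountable owner.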

Building a healthcare AI product and unsure where to start with architecture? Our managed pod has shipped 100+ healthcare products including AI-integrated tools across EMR, telehealth, and clinical workflows. Book a free architecture call →

Revenue Cycle Automation: The Clearest ROI in Healthcare AI

While clinical AI captures most of the attention, revenue cycle management automation is where healthcare AI is delivering the most measurable and fastest financial returns in 2026.

The average ROI on AI in healthcare is $3.20 for every $1 invested, with typical returns realized within 14 months, according to DemandSage's 2026 AI in healthcare statistics analysis. Revenue cycle is the primary driver of that return. The structural reason: prior authorization, denial management, coding, and claims processing are high-volume, rule-based processes — exactly what AI handles reliably. They also require no FDA clearance, which dramatically shortens the path from build to clinical deployment.

CMS launched the WISeR (Wasteful and Inappropriate Service Reduction) model in January 2026, piloting AI-supported prior authorization for traditional Medicare in six states. This is federal infrastructure AI — embedded into the core reimbursement workflow. It signals clearly that AI in revenue cycle is moving from vendor product to regulatory infrastructure.

The Rock Health data reinforces this: the top three funded digital health value propositions in H1 2025 were non-clinical workflow automation ($1.9 billion), clinical workflow tools ($1.9 billion), and data infrastructure ($893 million). Non-clinical workflow — dominated by revenue cycle AI — tied with clinical workflow for the top funding position for the first time in Rock Health's tracking history.

What teams are building in RCM AI: Prior authorization automation with payer-specific rule libraries; denial prediction models that flag high-risk claims before submission; coding suggestion tools with CPT and ICD accuracy tuning; eligibility verification automation; and claims optimization engines that reduce first-pass denial rates.
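A payer-specific rule library that flags claims before submission can be sketched as a lookup from payer to a list of checks. This is a deliberately simple rule-based illustration, not a trained denial prediction model. The payer names, the rules, and the idea that a given code needs prior authorization are assumptions made up for the example, and the CPT and ICD-10 values are placeholders.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List, Optional, Tuple


@dataclass(frozen=True)
class Claim:
    payer: str
    cpt_code: str
    icd10_codes: Tuple[str, ...]
    has_prior_auth: bool


# A rule returns a human-readable problem, or None if the claim passes.
Rule = Callable[[Claim], Optional[str]]


def requires_prior_auth(cpt_codes: FrozenSet[str]) -> Rule:
    def rule(claim: Claim) -> Optional[str]:
        if claim.cpt_code in cpt_codes and not claim.has_prior_auth:
            return f"{claim.cpt_code} needs prior authorization"
        return None
    return rule


def requires_diagnosis(claim: Claim) -> Optional[str]:
    return None if claim.icd10_codes else "no diagnosis code attached"


# Hypothetical rule library keyed by payer; contents are illustrative only.
RULES: Dict[str, List[Rule]] = {
    "PAYER_A": [requires_prior_auth(frozenset({"70553"})), requires_diagnosis],
    "PAYER_B": [requires_diagnosis],
}


def precheck(claim: Claim) -> List[str]:
    """Return the problems found; an empty list means nothing was flagged."""
    rules = RULES.get(claim.payer)
    if rules is None:
        return [f"no rule library for payer {claim.payer}"]
    problems: List[str] = []
    for rule in rules:
        message = rule(claim)
        if message is not None:
            problems.append(message)
    return problems


flagged = precheck(Claim("PAYER_A", "70553", ("G43.909",), has_prior_auth=False))
```

Keeping rules as small functions per payer makes each one testable and auditable on its own, which matters when a payer changes policy and only one rule needs to be updated.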

For teams evaluating how AI-powered RCM tools connect to clinical workflows, our guide on EMR integration approaches for healthcare product teams covers the connectivity architecture.

The Build vs. Buy Decision That Is Defining Healthcare AI in 2026

The build vs. buy question in healthcare AI became significantly more complex in Q1 2026 with Big Tech's aggressive entry into clinical AI. Microsoft launched Copilot Health in March 2026. OpenAI acquired Torch Health, a healthcare data interoperability startup, for approximately $100 million in January 2026 and launched ChatGPT Health with longitudinal medical memory. Amazon and Anthropic have deepened their healthcare AI offerings.

For healthtech product teams, this creates a specific architecture question. According to Bessemer Venture Partners' State of Health AI 2026, AI companies captured 55% of all healthtech funding in 2025, up from 37% in 2024. Healthcare AI companies are hitting $100 million ARR in under five years — a pace that previously took a decade. The capital is clearly concentrated, but the competitive dynamics are shifting.

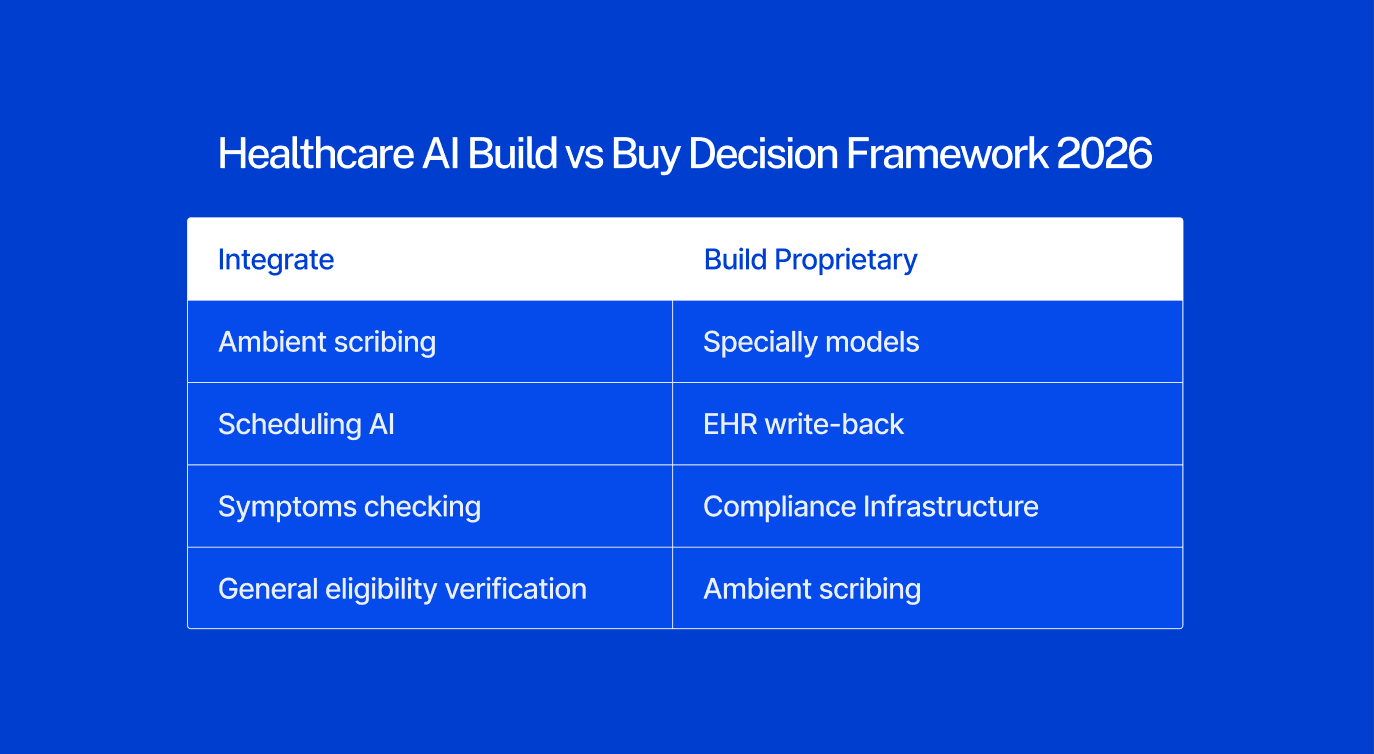

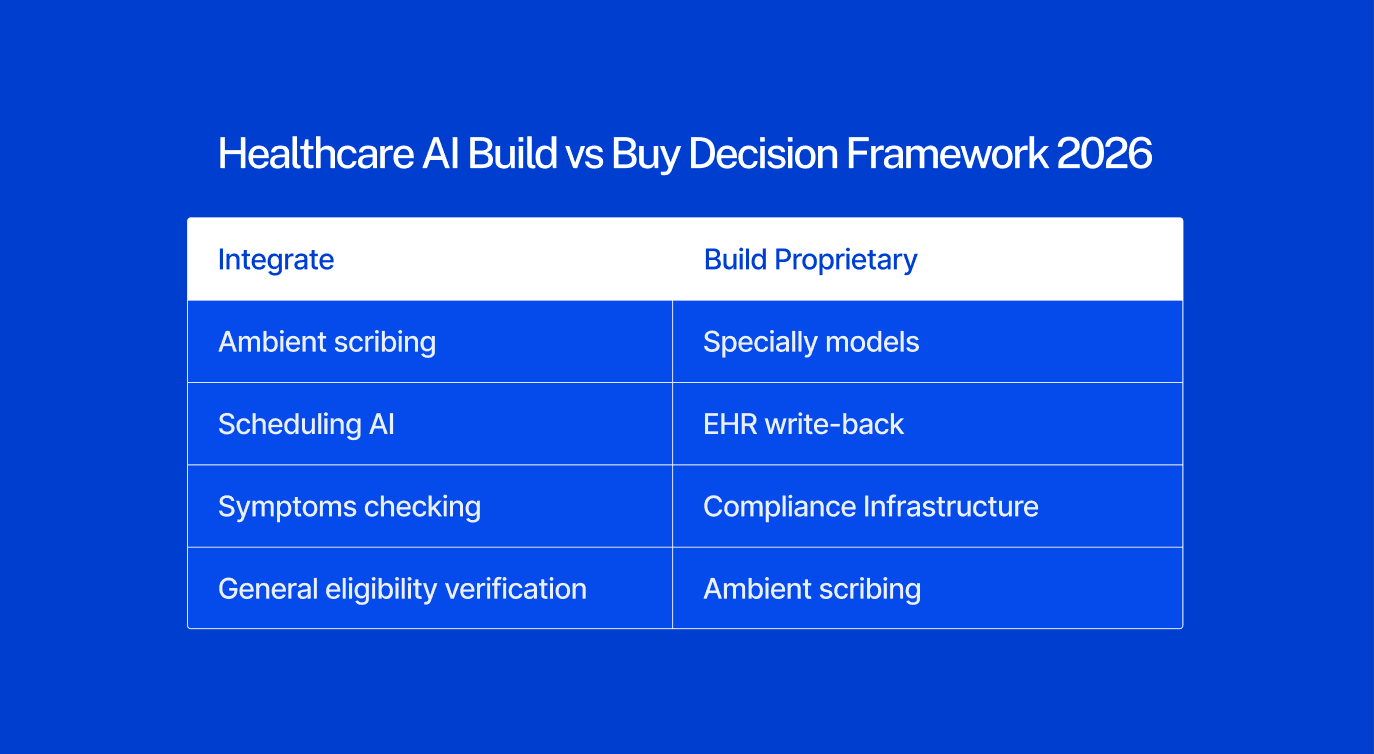

The practical framework for product teams deciding what to build vs. buy in 2026:

Integrate from platform providers when the capability is:

Undifferentiated and available compliantly from major EHR vendors or platform AI providers

In a commoditizing category: basic ambient scribing, appointment scheduling AI, general eligibility verification, symptom checking

A regulatory risk you do not need to own: general-purpose LLM integrations where platform providers have already built compliance infrastructure

Build proprietary when the capability requires:

Proprietary clinical data or specialty-specific training data you control

Deep EHR integration with write-back — not just read access — into specific clinical workflows

Workflow-specific compliance infrastructure (42 CFR Part 2, specialty documentation requirements, specific regulatory frameworks)

A differentiated clinical model specific to your patient population or care context

Governance and auditability infrastructure that enterprise buyers evaluate in procurement
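The two checklists above can be written down as a small decision helper, which is mostly useful for forcing a team to state its answers explicitly during an architecture review. This is a sketch under a simplifying assumption: any single "build" criterion is treated as enough to recommend building, which is one reading of the framework rather than a rule the framework itself states. `CapabilityProfile` and `recommend` are hypothetical names.

```python
from dataclasses import dataclass, fields
from typing import List, Tuple


@dataclass(frozen=True)
class CapabilityProfile:
    # One flag per "build proprietary" criterion in the framework.
    proprietary_clinical_data: bool = False
    ehr_write_back: bool = False
    workflow_specific_compliance: bool = False
    differentiated_clinical_model: bool = False
    governance_auditability: bool = False


def recommend(profile: CapabilityProfile) -> Tuple[str, List[str]]:
    """Return ('build' or 'integrate', list of criteria that triggered 'build')."""
    reasons = [f.name for f in fields(profile) if getattr(profile, f.name)]
    return ("build" if reasons else "integrate", reasons)


# A generic scheduling assistant with no special requirements.
generic = recommend(CapabilityProfile())

# A specialty documentation tool that must write structured notes back to the EHR.
specialty = recommend(CapabilityProfile(
    ehr_write_back=True,
    workflow_specific_compliance=True,
))
```

Returning the triggering criteria alongside the recommendation keeps the decision reviewable: a reader can see why a capability landed on the build side.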

The critical observation: ambient documentation itself is becoming a platform commodity. What differentiates the products winning enterprise contracts today is the compliance layer, the EHR integration depth, and the governance documentation, none of which platform providers deliver out of the box for specialty workflows.

What Separates Healthcare AI Products That Deploy From Those That Stall

The gap between AI healthcare products that reach clinical deployment and those that stall in procurement or pilot is rarely the AI itself. It is the infrastructure around it.

At one U.S. health system, 200 providers spent nearly a year validating an AI documentation tool before it was cleared for full deployment, according to HealthTech Magazine's 2026 healthcare IT leaders survey. That validation process (clinical accuracy review, specialty-specific performance testing, bias assessment, integration verification) is mandatory for enterprise buyers, and products that cannot support it will not survive procurement.

Four factors that consistently determine whether a healthcare AI product reaches deployment:

Clinical validation infrastructure. Can the product produce evidence of accuracy, bias assessment, and specialty-specific performance? Health system IT leaders now expect structured validation documentation, not pilot anecdotes. Products without it do not advance past initial procurement review.

EHR integration depth. AI that cannot write back to the EHR in a structured, auditable format cannot function in clinical practice. Read-only integrations do not survive enterprise evaluations. The integration must be bidirectional, compliant, and auditable.

Governance documentation. Who reviews the AI output? Who is accountable when the AI acts incorrectly? How is model drift detected? These questions must be answered in product documentation before a health system will contract. Governance is not a legal department problem; it is a product architecture problem.
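For the drift question specifically, one widely used starting point is the population stability index (PSI), which compares the distribution of a model input or output score in production against a baseline. The sketch below is a plain-Python illustration. The bin edges, the sample values, and the 0.25 alert threshold (a commonly cited rule of thumb, treated here as an assumption) would all need to be chosen and justified per product.

```python
import math
from typing import List, Sequence


def _bin_fractions(values: Sequence[float], edges: Sequence[float],
                   eps: float = 1e-6) -> List[float]:
    """Fraction of values in each bin; empty bins are floored at eps to avoid log(0)."""
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * (len(edges) + 1)
    for v in values:
        # Bin index = number of edges that are <= v.
        counts[sum(1 for e in edges if v >= e)] += 1
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(baseline: Sequence[float],
                               current: Sequence[float],
                               edges: Sequence[float]) -> float:
    """PSI = sum over bins of (current - baseline) * ln(current / baseline)."""
    b = _bin_fractions(baseline, edges)
    c = _bin_fractions(current, edges)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


# Illustrative model confidence scores; not real data.
EDGES = [0.25, 0.5, 0.75]
baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 0.95]
shifted_scores = [0.8] * 8 + [0.1, 0.6]

ALERT_THRESHOLD = 0.25  # assumption: rule-of-thumb cutoff for a significant shift
psi = population_stability_index(baseline_scores, shifted_scores, EDGES)
drift_alert = psi > ALERT_THRESHOLD
```

A check like this answers only part of the governance question. The documentation a health system asks for also needs to say who receives the alert and what happens to the model when it fires.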

State and federal regulatory compliance posture. By mid-2025, over 250 healthcare AI bills had been introduced across more than 34 states, with 33 enacted into law, according to the Manatt Health AI Policy Tracker. The regulatory environment is fragmenting by state. Products not tracking state-level AI transparency and disclosure requirements are accumulating compliance debt that will surface in enterprise procurement evaluations.

The through-line: healthcare AI in 2026 is not failing at the model layer. It is failing at the surrounding infrastructure that health systems need before they will trust a product enough to deploy it.

For product teams building compliance-first healthcare AI, our guide to key compliance requirements for healthcare software covers the architecture decisions in detail.

Where to Go From Here

The six categories above (documentation, diagnostics, agentic systems, revenue cycle, build vs. buy architecture, and deployment infrastructure) are where the real product decisions are happening in healthcare AI right now. Not in research papers. Not in investor decks. In architecture reviews, procurement evaluations, and sprint planning sessions at product teams across the U.S.

The teams making progress in 2026 are the ones that treated compliance, governance, and integration architecture as first-order product design constraints, not as post-launch additions. The AI model is rarely the bottleneck. The infrastructure around it is.

If you are building a healthcare AI product and want a direct conversation about which layer to build first, what your compliance posture needs to look like for enterprise procurement, or how to scope the integration architecture before you write the first line of code, book a free 30-minute call with our team. No pitch, just a practical conversation about your build.

Frequently Asked Questions

What AI technologies are healthtech teams actually building in 2026?

The six most active build categories are: ambient clinical documentation (the most universally adopted AI use case, with 100% of surveyed health systems reporting adoption activity per JAMIA); diagnostic AI concentrated in medical imaging (76% of the 1,250+ FDA-cleared AI devices); agentic AI being deployed first in administrative workflows where governance requirements are more manageable; revenue cycle automation including prior authorization, denial management, and coding; build vs. buy architecture decisions driven by Big Tech's entry into healthcare; and deployment infrastructure — clinical validation, EHR write-back, governance documentation — that determines whether AI products survive enterprise procurement.

How is AI changing healthcare product development for smaller healthtech companies in 2026?

The primary shift is that AI capability itself has become less of a differentiator as platform providers and major EHRs have embedded AI into their core products. The competitive advantage for smaller healthtech teams is now in specialty-specific model tuning, EHR integration depth, compliance documentation, and governance frameworks. Teams that built differentiation on generic AI capabilities face commoditization pressure. Teams that built on proprietary clinical data, specialty workflows, or deep EHR integration are holding or expanding their position.

What is agentic AI in healthcare and how does it differ from standard AI tools?

Standard healthcare AI tools — ambient documentation, diagnostic imaging support, risk stratification models — advise or suggest. They surface information that a clinician then acts on. Agentic AI systems plan and execute multi-step tasks autonomously, without requiring a human to initiate each action. A clinical agent might reschedule an appointment, adjust a medication dose, or send a care gap notification without explicit human triggering of that specific action. The distinction matters enormously for regulation and liability. As of April 2026, the FDA has cleared zero agentic clinical AI systems. ARPA-H's ADVOCATE program is working to build the first one through a 39-month FDA authorization pathway for a cardiovascular care agent.

What does it cost to build an AI-powered healthcare product in 2026?

Cost depends heavily on which AI layer you are building and how much you are integrating vs. building proprietary. Enterprise ambient AI scribe platforms with deep EHR integration cost providers $500–$1,500 per clinician per month. Custom clinical AI products requiring specialty-specific model training, deep EHR write-back, compliance documentation, and governance infrastructure add significant build cost on top of foundation model expenses. A realistic estimate for a production-ready, enterprise-deployable healthcare AI product with full compliance, EHR integration, and clinical validation infrastructure ranges from $500,000 to $2 million or more depending on scope and specialty complexity. The compliance and validation infrastructure typically represents 30–50% of total build cost.

How do you get FDA clearance for an AI healthcare product?

FDA clearance depends on the intended use. AI tools used for administrative tasks (scheduling, billing, documentation without diagnostic claims) typically fall outside FDA oversight. AI tools that assist with diagnosis, treatment decisions, or clinical monitoring are regulated as Software as a Medical Device (SaMD). Most cleared AI medical devices have used the 510(k) pathway, which demonstrates substantial equivalence to an already-cleared predicate device and typically does not require new clinical trials. For novel AI systems without a clear predicate, such as agentic clinical AI, the De Novo pathway (for low-to-moderate-risk devices) or PMA (for high-risk devices) applies, with higher evidence requirements. The FDA's December 2024 Predetermined Change Control Plan guidance is critical for AI products that learn and update post-market, allowing pre-specified model updates without a full resubmission.

In the first half of 2025, AI-enabled healthcare startups captured 62% of all digital health venture funding in the U.S. raising an average of $34.4 million per round, an 83% premium over non-AI peers. Nine of the eleven mega-deals (over $100 million) in that period went to AI-enabled companies, according to Rock Health's H1 2025 digital health funding report. The global AI in healthcare market is valued at $51.2 billion in 2026, forecast to reach $613 billion by 2034, according to Precedence Research.

Those numbers tell you the market is real. What they do not tell you is where the actual product decisions are happening which AI capabilities healthtech teams are building from scratch, which they are buying from platform providers, and which are proving harder to deploy than the investor decks suggested.

This article maps six active build categories based on where product teams are spending architecture cycles right now. Not trends. Not forecasts. Build decisions.

For context on how AI fits the broader 2026 healthcare software landscape, our healthcare software development trends 2026 guide covers the full stack.

Clinical Documentation: The First AI Category to Reach Saturation

Ambient AI documentation, clinical AI that listens to patient-provider conversations and generates structured notes in real time is no longer a trend. It is the default deployment posture at major U.S. health systems.

A 2025 JAMIA survey of 43 U.S. health systems found that ambient documentation was the only AI use case where 100% of respondents reported adoption activity, making it the single most universally deployed AI application across healthcare. The ambient scribe market generated $600 million in revenue in 2025, a 2.4x year-over-year increase, according to Menlo Ventures' 2025 State of AI in Healthcare report. Mass General Brigham deployed ambient documentation to all its physicians by April 2025, reporting a 20% reduction in clinical burnout. Kaiser Permanente rolled out Abridge's ambient documentation across 40 hospitals and 600+ medical offices — the largest generative AI rollout in healthcare history.

The product implication for teams building in this space: the ambient scribe category itself is commoditizing. Epic, athenahealth (which launched athenaAmbient in early 2026), Oracle Health, and Meditech have all embedded ambient documentation into their core EHR workflows. The differentiation is no longer in the core AI capability, it is in specialty-specific model tuning, EHR write-back depth, and the compliance infrastructure around the model.

What product teams building documentation AI are focused on: Specialty-tuned models for high-documentation settings such as psychiatry, emergency medicine, and oncology; structured EHR write-back that passes audit; consent management workflows that comply with state wiretapping laws; and mandatory clinician review gates that address False Claims Act exposure from automation bias.

The compliance trap: "Automation bias" — where a clinician signs an AI-generated note without reviewing it creates both malpractice and False Claims Act liability. For any product that generates clinical documentation, the review mechanism is not a UX feature. It is a regulatory requirement that must be designed into the product architecture from day one.

For teams building AI tools that connect to EHR workflows, our breakdown of top EMR integration tools for healthcare product teams covers the integration architecture in detail.

Diagnostic AI: Concentrated in Imaging, Cautious Everywhere Else

As of July 2025, the FDA has cleared over 1,250 AI-enabled medical devices authorized for marketing in the United States, up from 950 devices in August 2024, according to the Bipartisan Policy Center's FDA oversight analysis. Approximately 76% of those clearances, roughly 1,100 devices, sit in radiology. Cardiology is second at around 9%. The remaining specialties share the rest.

This concentration is not arbitrary. Imaging AI has characteristics that make FDA clearance faster and more tractable: clearer clinical ground truth (a tumor is present or it is not), self-contained digital workflows, lower risk profile since the tool supports rather than replaces clinician judgment, and an established predicate device landscape that enables 510(k) clearance paths. Outside imaging, the FDA pathway is harder. Clinical areas like primary care and psychiatry involve unstructured data, significant contextual ambiguity, and higher-stakes decisions, all of which make both development and regulatory review far more complex.

Aidoc's recent FDA clearance for a platform detecting 14 acute conditions from a single CT scan workflow represents a meaningful regulatory shift from single-condition classifiers to multi-condition, workflow-aware platform authorizations. The clearance signals growing FDA appetite for more sophisticated diagnostic AI, but the evidence bar is rising alongside it.

Product teams building diagnostic AI outside imaging need to understand the Predetermined Change Control Plan (PCCP) framework finalized by the FDA in December 2024, which allows manufacturers to pre-specify how AI models will be updated post-market without requiring new submissions for each iteration. For continuously learning models, this is not optional paperwork, it is the regulatory architecture that makes ongoing model improvement legally possible.

What product teams building diagnostic AI are focused on: Imaging analysis tools with specialty-specific performance tuning; PCCP documentation for continuous learning models; multi-modal diagnostic platforms that cross-reference imaging findings with EHR history; and clinical decision support tools that explicitly stay below the FDA's regulatory threshold by advising rather than deciding.

Agentic AI: The Build Phase Is Starting — Deployment Is Still Limited

Agentic AI — systems that autonomously plan and execute multi-step clinical or operational tasks without human initiation of each step is attracting the most investor attention and the most implementation caution simultaneously.

According to ScienceSoft's Q1 2026 Healthcare AI Market Watch, agentic AI adoption in healthcare is growing more slowly than other AI categories. The structural reason: action-based AI systems require deeper system integration rights, broader data access permissions, and much stricter governance frameworks than documentation or diagnostic tools. Most healthcare organizations have not yet built the governance infrastructure required to deploy agents that can autonomously act.

The regulatory frontier is being set right now. ARPA-H launched its ADVOCATE (Agentic AI-Enabled Cardiovascular Care Transformation) program in January 2026, aiming to develop the first FDA-authorized agentic AI system — a patient-facing cardiovascular care agent — through a 39-month program that explicitly includes an FDA authorization pathway, as reported by STAT News. To date, the FDA has cleared zero agentic AI systems. ADVOCATE is designed to create the pathway.

As BCG's 2026 healthcare AI analysis notes, agentic AI represents the next structural shift — from AI that advises to AI that executes. But the distinction between suggesting and acting carries completely different regulatory and liability implications. A clinical AI agent that can modify a medication or schedule a procedure is not a decision support tool. In the FDA's framework, it is a medical device performing a clinical action.

Where teams are actively building agents in 2026: Prior authorization processing, care gap identification and outreach, revenue cycle workflow automation, appointment scheduling, and patient-facing health navigation for lower-acuity tasks. The consistent pattern: agents are being deployed first in administrative and operational workflows where the liability surface is lower and governance requirements are cleaner.

The governance question every team must answer before building an agent: What access rights does the agent need? Who is accountable when the agent acts incorrectly? How is the agent's behavior monitored post-deployment? These are not IT questions. They are product architecture questions — and the answers must be documented before enterprise buyers will evaluate the product.

Building a healthcare AI product and unsure where to start with architecture? Our managed pod has shipped 100+ healthcare products including AI-integrated tools across EMR, telehealth, and clinical workflows. Book a free architecture call →

Revenue Cycle Automation: The Clearest ROI in Healthcare AI

While clinical AI captures most of the attention, revenue cycle management automation is where healthcare AI is delivering the most measurable and fastest financial returns in 2026.

The average ROI on AI in healthcare is $3.20 for every $1 invested, with typical returns realized within 14 months, according to DemandSage's 2026 AI in healthcare statistics analysis. Revenue cycle is the primary driver of that return. The structural reason: prior authorization, denial management, coding, and claims processing are high-volume, rule-based processes — exactly what AI handles reliably. They also require no FDA clearance, which dramatically shortens the path from build to clinical deployment.

CMS launched the WISeR (Wasteful and Inappropriate Service Reduction) model in January 2026, piloting AI-supported prior authorization for traditional Medicare in six states. This is federal infrastructure AI — embedded into the core reimbursement workflow. It signals clearly that AI in revenue cycle is moving from vendor product to regulatory infrastructure.

The Rock Health data reinforces this: the top three funded digital health value propositions in H1 2025 were non-clinical workflow automation ($1.9 billion), clinical workflow tools ($1.9 billion), and data infrastructure ($893 million). Non-clinical workflow — dominated by revenue cycle AI — tied with clinical workflow for the top funding position for the first time in Rock Health's tracking history.

What teams are building in RCM AI: Prior authorization automation with payer-specific rule libraries; denial prediction models that flag high-risk claims before submission; coding suggestion tools with CPT and ICD accuracy tuning; eligibility verification automation; and claims optimization engines that reduce first-pass denial rates.

For teams evaluating how AI-powered RCM tools connect to clinical workflows, our guide on EMR integration approaches for healthcare product teams covers the connectivity architecture.

The Build vs. Buy Decision That Is Defining Healthcare AI in 2026

The build vs. buy question in healthcare AI became significantly more complex in Q1 2026 with Big Tech's aggressive entry into clinical AI. Microsoft launched Copilot Health in March 2026. OpenAI acquired Torch Health — a healthcare data interoperability startup for approximately $100 million in January 2026 and launched ChatGPT Health with longitudinal medical memory. Amazon and Anthropic have deepened their healthcare AI offerings.

For healthtech product teams, this creates a specific architecture question. According to Bessemer Venture Partners' State of Health AI 2026, AI companies captured 55% of all healthtech funding in 2025, up from 37% in 2024. Healthcare AI companies are hitting $100 million ARR in under five years — a pace that previously took a decade. The capital is clearly concentrated, but the competitive dynamics are shifting.

The practical framework for product teams deciding what to build vs. buy in 2026:

Integrate from platform providers when the capability is:

Undifferentiated and available compliantly from major EHR vendors or platform AI providers

In a commoditizing category: basic ambient scribing, appointment scheduling AI, general eligibility verification, symptom checking

Regulatory risk you do not need to own: general-purpose LLM integrations where platform providers have already built compliance infrastructure

Build proprietary when the capability requires:

Proprietary clinical data or specialty-specific training data you control

Deep EHR integration with write-back — not just read access — into specific clinical workflows

Workflow-specific compliance infrastructure (42 CFR Part 2, specialty documentation requirements, specific regulatory frameworks)

A differentiated clinical model specific to your patient population or care context

Governance and auditability infrastructure that enterprise buyers evaluate in procurement
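The framework above can be sketched as a simple checklist in code. This is a deliberately simplified reading of it, not a scoring model from the article: the `Capability` class and its field names are hypothetical, and the rule "any build criterion present means build" is an assumption made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Capability:
    name: str
    # Each flag mirrors one "build proprietary" criterion from the list above.
    needs_proprietary_data: bool = False
    needs_ehr_write_back: bool = False
    needs_workflow_specific_compliance: bool = False
    needs_differentiated_clinical_model: bool = False
    needs_procurement_grade_governance: bool = False


def recommend(cap: Capability) -> str:
    """Return "build" if any build criterion applies, otherwise "integrate"."""
    build_signals = [
        cap.needs_proprietary_data,
        cap.needs_ehr_write_back,
        cap.needs_workflow_specific_compliance,
        cap.needs_differentiated_clinical_model,
        cap.needs_procurement_grade_governance,
    ]
    return "build" if any(build_signals) else "integrate"


if __name__ == "__main__":
    print(recommend(Capability("appointment scheduling AI")))
    print(recommend(Capability("specialty note write-back", needs_ehr_write_back=True)))
```

In practice a team would weigh these criteria rather than treat them as strict booleans, but writing them down explicitly makes the decision reviewable.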

The critical observation: ambient documentation itself is becoming a platform commodity. What differentiates the products winning enterprise contracts today is the compliance layer, the EHR integration depth, and the governance documentation, none of which platform providers deliver out of the box for specialty workflows.

What Separates Healthcare AI Products That Deploy From Those That Stall

The gap between AI healthcare products that reach clinical deployment and those that stall in procurement or pilot is rarely the AI itself. It is the infrastructure around it.

At one U.S. health system, 200 providers spent nearly a year validating an AI documentation tool before it was cleared for full deployment, according to HealthTech Magazine's 2026 healthcare IT leaders survey. That validation process (clinical accuracy review, specialty-specific performance testing, bias assessment, and integration verification) is not optional for enterprise buyers. It is mandatory, and products that cannot support it will not survive procurement.

Four factors that consistently determine whether a healthcare AI product reaches deployment:

Clinical validation infrastructure. Can the product produce evidence of accuracy, bias assessment, and specialty-specific performance? Health system IT leaders now expect structured validation documentation, not pilot anecdotes. Products without it do not advance past initial procurement review.

EHR integration depth. AI that cannot write back to the EHR in a structured, auditable format cannot function in clinical practice. Read-only integrations do not survive enterprise evaluations. The integration must be bidirectional, compliant, and auditable.
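One way to make write-back auditable is to record every write in a tamper-evident log. The sketch below illustrates only that auditability aspect, using a hash chain built with Python's standard library. It is not an EHR or FHIR API: the function names and entry fields (`actor`, `action`, `payload`) are hypothetical, and a real system would also need timestamps, durable storage, and access controls.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry in the chain


def _digest(body: dict) -> str:
    # Serialize with sorted keys so the same content always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, actor: str, action: str, payload: dict) -> dict:
    """Append a write-back record that is chained to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"actor": actor, "action": action, "payload": payload, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

Because each entry includes the hash of the one before it, editing any past record invalidates every later hash, which is the property an auditor can check.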

Governance documentation. Who reviews the AI output? Who is accountable when the AI acts incorrectly? How is model drift detected? These questions must be answered in product documentation before a health system will contract. Governance is not a legal department problem; it is a product architecture problem.
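As one possible answer to the drift question, a team might compare the distribution of a model's scores at validation time against recent production scores. The sketch below uses the Population Stability Index (PSI), a common drift statistic. It is an illustrative choice, not a method named in the sources above, and the bin count and the floor for empty bins are assumptions.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a recent sample of model scores."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp out-of-range values into the first or last bin.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A PSI of zero means the two binned distributions match. A commonly cited rule of thumb treats values above roughly 0.25 as a significant shift, though the right alert threshold depends on the model and should be written into the governance documentation.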

State and federal regulatory compliance posture. By mid-2025, over 250 healthcare AI bills had been introduced across more than 34 states, with 33 enacted into law, according to the Manatt Health AI Policy Tracker. The regulatory environment is fragmenting by state. Products not tracking state-level AI transparency and disclosure requirements are accumulating compliance debt that will surface in enterprise procurement evaluations.

The through-line: healthcare AI in 2026 is not failing at the model layer. It is failing at the surrounding infrastructure that health systems need before they will trust a product enough to deploy it.

For product teams building compliance-first healthcare AI, our guide to key compliance requirements for healthcare software covers the architecture decisions in detail.

Where to Go From Here

The six categories above (documentation, diagnostics, agentic systems, revenue cycle, build vs. buy architecture, and deployment infrastructure) are where the real product decisions are happening in healthcare AI right now. Not in research papers. Not in investor decks. In architecture reviews, procurement evaluations, and sprint planning sessions at product teams across the U.S.

The teams making progress in 2026 are the ones that treated compliance, governance, and integration architecture as first-order product design constraints, not as post-launch additions. The AI model is rarely the bottleneck. The infrastructure around it is.

If you are building a healthcare AI product and want a direct conversation about which layer to build first, what your compliance posture needs to look like for enterprise procurement, or how to scope the integration architecture before you write the first line of code, book a free 30-minute call with our team. No pitch, just a practical conversation about your build.

Frequently Asked Questions

What AI technologies are healthtech teams actually building in 2026?

The six most active build categories are: ambient clinical documentation (the most universally adopted AI use case, with 100% of surveyed health systems reporting adoption activity per JAMIA); diagnostic AI concentrated in medical imaging (76% of the 1,250+ FDA-cleared AI devices); agentic AI being deployed first in administrative workflows where governance requirements are more manageable; revenue cycle automation including prior authorization, denial management, and coding; build vs. buy architecture decisions driven by Big Tech's entry into healthcare; and deployment infrastructure — clinical validation, EHR write-back, governance documentation — that determines whether AI products survive enterprise procurement.

How is AI changing healthcare product development for smaller healthtech companies in 2026?

The primary shift is that AI capability itself has become less of a differentiator as platform providers and major EHRs have embedded AI into their core products. The competitive advantage for smaller healthtech teams is now in specialty-specific model tuning, EHR integration depth, compliance documentation, and governance frameworks. Teams that built differentiation on generic AI capabilities face commoditization pressure. Teams that built on proprietary clinical data, specialty workflows, or deep EHR integration are holding or expanding their position.

What is agentic AI in healthcare and how does it differ from standard AI tools?

Standard healthcare AI tools — ambient documentation, diagnostic imaging support, risk stratification models — advise or suggest. They surface information that a clinician then acts on. Agentic AI systems plan and execute multi-step tasks autonomously, without requiring a human to initiate each action. A clinical agent might reschedule an appointment, adjust a medication dose, or send a care gap notification without explicit human triggering of that specific action. The distinction matters enormously for regulation and liability. As of April 2026, the FDA has cleared zero agentic clinical AI systems. ARPA-H's ADVOCATE program is working to build the first one through a 39-month FDA authorization pathway for a cardiovascular care agent.

What does it cost to build an AI-powered healthcare product in 2026?

Cost depends heavily on which AI layer you are building and how much you are integrating vs. building proprietary. Enterprise ambient AI scribe platforms with deep EHR integration cost providers $500–$1,500 per clinician per month. Custom clinical AI products requiring specialty-specific model training, deep EHR write-back, compliance documentation, and governance infrastructure add significant build cost on top of foundation model expenses. A realistic estimate for a production-ready, enterprise-deployable healthcare AI product with full compliance, EHR integration, and clinical validation infrastructure ranges from $500,000 to $2 million or more depending on scope and specialty complexity. The compliance and validation infrastructure typically represents 30–50% of total build cost.
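The ranges above can be turned into quick back-of-the-envelope arithmetic. The helper names and the 100-clinician organization below are hypothetical; the per-clinician prices, build-cost range, and 30–50% share are the figures quoted in this answer.

```python
def annual_scribe_cost(clinicians: int, per_clinician_month: int) -> int:
    """Yearly licence cost for a per-clinician, per-month scribe platform."""
    return clinicians * per_clinician_month * 12


def compliance_share(total_build_cost: int, low_pct: int = 30, high_pct: int = 50):
    """Dollar range of build cost attributable to compliance and validation."""
    return (total_build_cost * low_pct / 100, total_build_cost * high_pct / 100)


if __name__ == "__main__":
    # Hypothetical 100-clinician organization at the quoted price range.
    print(annual_scribe_cost(100, 500), annual_scribe_cost(100, 1500))
    # Compliance share at the low and high ends of the quoted build cost.
    print(compliance_share(500_000), compliance_share(2_000_000))
```

On these figures, a 100-clinician organization would pay $600,000 to $1.8 million per year in scribe licences, and compliance and validation would account for $150,000 to $250,000 of a $500,000 build or $600,000 to $1 million of a $2 million build.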

How do you get FDA clearance for an AI healthcare product?

FDA clearance depends on the intended use. AI tools used for administrative tasks (scheduling, billing, documentation without diagnostic claims) typically fall outside FDA oversight. AI tools that assist with diagnosis, treatment decisions, or clinical monitoring are regulated as Software as a Medical Device (SaMD). Most cleared AI medical devices have used the 510(k) pathway, which demonstrates substantial equivalence to an already-cleared predicate device and usually does not require new clinical trials. For new high-risk AI systems without a clear predicate, such as agentic clinical AI, De Novo or PMA pathways apply with higher evidence requirements. The FDA's December 2024 Predetermined Change Control Plan guidance is critical for AI products that learn and update post-market, allowing pre-specified model updates without a full resubmission.

Chinmay Chandgude is a partner at Latent with over 9 years of experience building custom digital platforms for the healthcare and finance sectors. He focuses on creating scalable and secure web and mobile applications to drive technological transformation. Based in Pune, India, Chinmay is passionate about delivering user-centric solutions that improve efficiency and reduce costs.
